When the new AI tools went live, the tech team called it a smooth rollout. Systems were up, licenses assigned, the vendor’s checklist was complete.

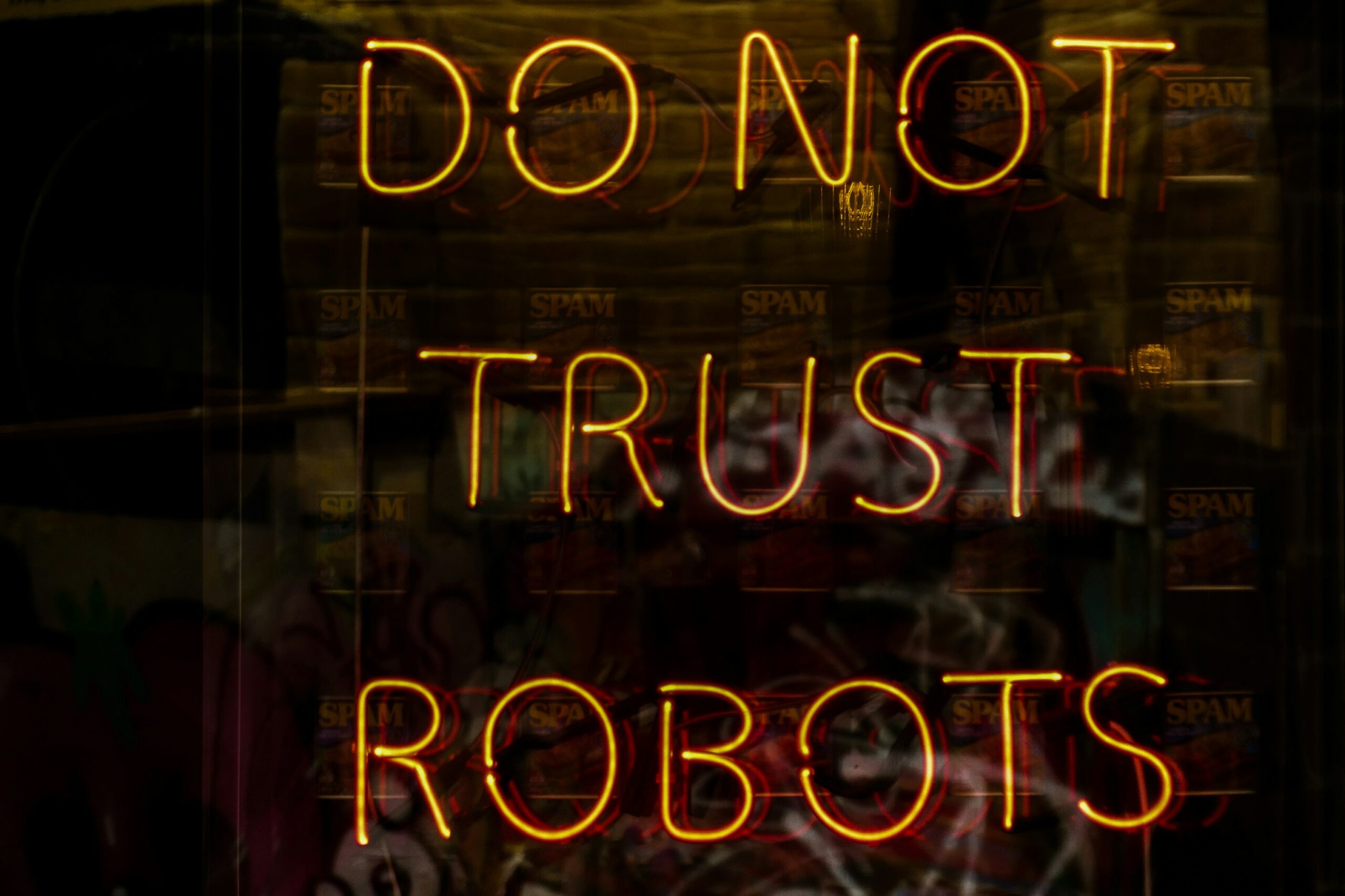

But in a climate where only 35% of people worldwide say they trust businesses to use AI responsibly (Edelman Trust Barometer 2024, Special Report on Trust in Technology), “working” isn’t the same as “trusted.” Two weeks later, HR was swamped:

- A department head wanted to know if they had to use the AI tool to screen candidates.

- A manager complained the scheduling algorithm ignored people’s availability.

- Several employees were quietly asking whether the system was tracking their work in ways no one had explained.

The technology functioned as designed. Adoption was patchy. Trust was shaky. HR was translating a rollout they hadn’t led.

We’ve seen this across industries. When AI is introduced without enough attention to people, culture, and communication, Talent leaders inherit the fallout. From our Leading Ethical AI work, three steps help prevent it:

Readiness → Trust → Traction.

Step 1: Readiness — Start with the Reality Check

Readiness means mapping where people stand before AI arrives:

- Which teams are already experimenting with AI.

- Where resistance is likely to surface.

- What skills or understanding are missing.

A consumer goods company learned this too late. Headquarters staff had been casually using AI for months, but warehouse supervisors hadn’t touched it and voiced strong doubts about it. When the AI scheduling tool launched, supervisors ignored it. A quick pulse survey on AI literacy and trust beforehand would have flagged the gap, giving leaders a chance to adjust the pace of the rollout and the support behind it.

Questions to ask:

- Who in the organization is already using AI, even unofficially?

- Who has never touched it — and why?

- What’s the one thing that could stop people from trying it?

Step 2: Trust — Build it Before You Need it

If employees see AI as unfair, opaque, or unchecked, they will work around it. Trust comes from:

- Agreeing on boundaries together — where AI will be used and where it won’t.

- Making human oversight explicit.

- Communicating in plain language.

At a manufacturing firm, leaders paused the rollout of an AI hiring tool after employees raised bias concerns. They brought managers and staff together to co-write “AI Ground Rules.” Debates got heated, but by the end, more people were willing to use the tool because they had helped set the guardrails.

Questions to ask:

- Where will people expect a human, not AI, to make the call?

- How will we show that AI results are being checked?

- Can we explain how the AI works in simple terms?

Step 3: Traction — Follow the Energy

The Traction Test — Clarity, Demand, Signal — helps decide if an AI initiative should grow:

- Clarity – People know what it does and why it matters.

- Demand – People are asking for it.

- Signal – People are already using it without being told.

A financial services firm launched two AI tools — a scheduling app and a proposal drafting assistant. After a month, usage logs told the story: the proposal tool was active daily; the scheduler wasn’t. They expanded the proposal tool and dropped the scheduler.
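For teams that want to run the Signal check from usage logs themselves, here is a minimal sketch in Python. The log format, the tool names, and the thresholds (`min_active_share`, `min_users`) are all assumptions made for illustration, not figures from the firm above. It covers Signal only; Clarity and Demand still have to come from conversations, surveys, and requests.

```python
from collections import defaultdict
from datetime import date

# Hypothetical usage-log rows: (tool name, user id, day of use).
usage_log = [
    ("proposal_assistant", "u1", date(2024, 5, 1)),
    ("proposal_assistant", "u2", date(2024, 5, 2)),
    ("proposal_assistant", "u1", date(2024, 5, 3)),
    ("proposal_assistant", "u3", date(2024, 5, 4)),
    ("scheduler", "u4", date(2024, 5, 1)),
]


def signal_summary(log, window_days):
    """Summarize, per tool, how many days it was used and by how many people."""
    days = defaultdict(set)
    users = defaultdict(set)
    for tool, user, day in log:
        days[tool].add(day)
        users[tool].add(user)
    return {
        tool: {
            "active_days": len(days[tool]),
            "distinct_users": len(users[tool]),
            # Share of days in the review window with at least one use.
            "active_share": len(days[tool]) / window_days,
        }
        for tool in days
    }


def has_signal(stats, min_active_share=0.5, min_users=2):
    """Assumed rule of thumb: used on most days, by more than one person."""
    return (
        stats["active_share"] >= min_active_share
        and stats["distinct_users"] >= min_users
    )


summary = signal_summary(usage_log, window_days=4)
for tool, stats in summary.items():
    verdict = "shows signal" if has_signal(stats) else "needs a closer look"
    print(f"{tool}: {stats['active_days']} active days, "
          f"{stats['distinct_users']} users, {verdict}")
```

A tool that fails this check is not automatically a candidate to drop. Low usage can also point to a readiness or trust gap, so read the numbers alongside the questions below.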

Questions to ask:

- Do people understand what this tool is for?

- Are they asking to use it, or do we have to push it?

- What proof do we have that it’s helping?

The takeaway:

When tech leads the rollout, Talent ends up managing the human side. The earlier HR and business leaders get involved in readiness, trust, and traction, the smoother AI adoption becomes, and the more likely employees are to see AI as something that works for them rather than something done to them. Reach out to us to learn more about our Leading Ethical AI Series.

Source: Edelman Trust Barometer 2024, Special Report on Trust in Technology.